Why Your B2B Content Stopped Bringing Clicks

Your rankings are stable. Your traffic isn't. The cause is a problem Google just named publicly, and most B2B sites have it.

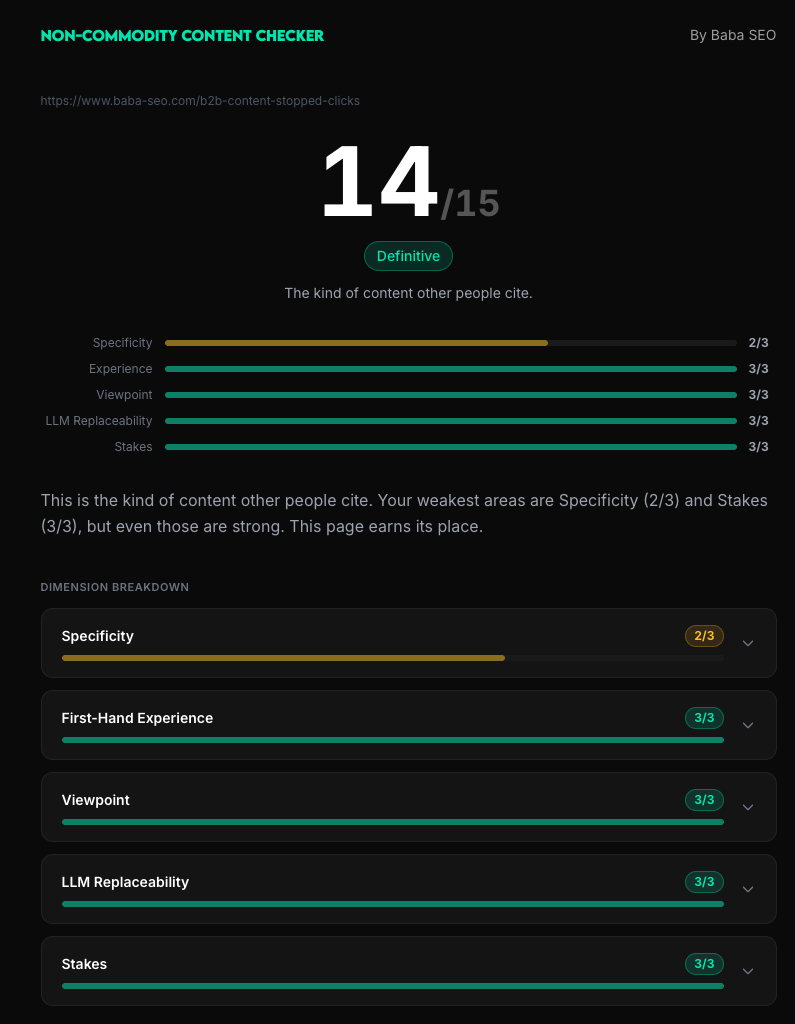

By Baba Hausmann · Non-Commodity Score: 14/15 · Last updated April 2026

Rankings up, clicks down

If you run SEO for a B2B company, you've probably seen this in your last quarter's data. Your commercial pages still rank where they used to. Some of them rank better. The clicks are gone anyway.

I've seen this play out across two B2B portfolios in Q1 2026 — one in regulated financial services, one in commercial equipment. Different categories, same pattern.

Rankings: holding or improving by ~2 positions on average.

CTR: down 35-72% across the commercial page set.

On one portfolio, a flagship commercial page held position 1 organically on a key category query while losing more than 60% of its clicks. On the other, the highest-CPC commercial keyword in the set (€19+ CPC, real money) sat at position 30. Not because the domain couldn't rank, but because the page that ranked was the wrong one.

This is not a Core Update story. The pages didn't get demoted. The SERP got rearranged around them.

The cost of ranking on commodity content has gone from "lower CTR than you'd want" to "impressions without clicks."

What Google said about why this is happening

Google's Search Central team presented the framing publicly in April 2026. Two slides matter.

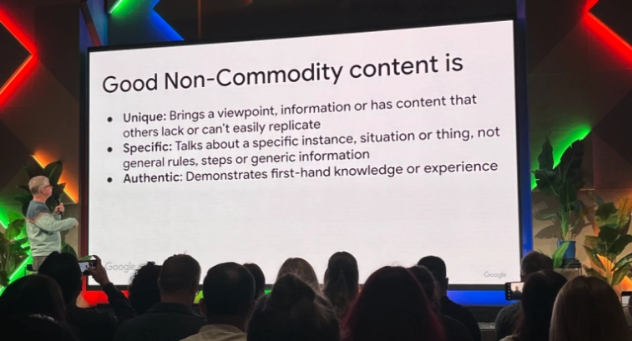

Slide 1: "Good Non-Commodity content is"

Unique: brings a viewpoint, information or has content that others lack or can't easily replicate

Specific: talks about a specific instance, situation or thing, not general rules, steps or generic information

Authentic: demonstrates first-hand knowledge or experience

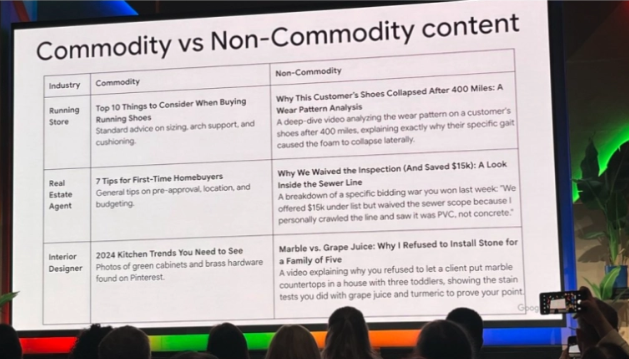

Slide 2: Commodity vs Non-Commodity

The slide contrasted real examples. A running store's "Top 10 Things to Consider When Buying Running Shoes" is commodity. A running store's "Why This Customer's Shoes Collapsed After 400 Miles: A Wear Pattern Analysis" is non-commodity. Same category, same expertise, totally different content.

Google didn't invent the principle here. They've been articulating some version of it for years under EEAT. What changed is that they named the failure mode directly. At the same event, they confirmed that high-volume AI-generated content is now treated as scaled content abuse, meaning commodity content isn't just being ignored; it's being penalized.

Why this matters more in 2026 than it did in 2025

Here's the mechanism most readers haven't connected yet.

AI Overviews can assemble an answer to a generic commercial query from eight commodity sources without depending on any single one. On one of these portfolios, the SERP for a category query where the flagship product page ranked showed an AI Overview citing eight competitors and the client's page zero times. Not "ranked low." Not cited. The AIO didn't need it.

On the same domain, for a more specific query (one where the answer is tied to a named customer story or a first-hand decision), the AIO either cites the page or doesn't render at all. The content that survives is the content the AIO can't compress without losing meaning.

This is the actual mechanism. AI Overviews are a commodity-content compressor. They take eight average pages saying the same thing and produce a summary that no single page beats. The pages keep their rankings. The clicks go to the summary instead.

Commodity content used to lose 20% of its CTR to featured snippets. Now it loses 40-60% of its CTR to AI Overviews. The losses compound with each update.

The second portfolio shows the same pattern from a different angle. It ranks for roughly 200 non-brand keywords, and only around 10% of them have commercial intent. The rest are informational queries ("best XYZ in the world" and the like) where the domain shows up but no one buys. AI Overviews ate the informational queries first, because those are the most commodity-shaped. The commercial queries followed.

The pages that survived

Inside the same domains losing clicks across most of the commercial set, some pages grew against the trend.

| Bleeding | Growing | What makes it non-commodity |
|---|---|---|
| Generic category pages | Named customer stories (+92%) | A specific person, signed permission, a real number |
| Boilerplate "what is X" guides | First-hand competitor comparisons (+64%) | First-hand "why our customers switched from Competitor X" |
| AI-paraphrased blog posts | Editorial with named authors (+44%) | A real person taking a real position |
| Anonymous service pages | Sector-specific case studies (+62%) | A specific industry, a specific use case, named details |

These aren't theoretical examples. They're click-rate winners pulled from real Q1 data on a domain where the commodity pages lost 35-72%.

The pattern is consistent across both clients. Pages a competitor's intern could produce with ChatGPT and a decent brief: bleeding. Pages that contain something inseparable from the brand as the source: growing.

What "non-commodity content" looks like in practice

Google's "unique, specific, authentic" framing is directionally right but too vague to audit against. The way I think about it for B2B content is five dimensions, each scored 0-3.

Specificity — Named instances, exact numbers, verifiable particulars. "We helped a customer increase deals 340% over nine months" beats "we drive significant pipeline growth." Generic numbers like "many customers" fail this dimension at zero.

First-Hand Experience — Evidence the author actually did the thing. Not "claims experience." A canonical tag bug caught on day 3 of a migration, with the specific page count it affected, is first-hand experience. "Migrations require careful planning" is not.

Viewpoint — Positions someone could disagree with. "Most SEO audits are theater" is a viewpoint. "Quality matters more than quantity" is consensus, not a viewpoint.

LLM Replaceability (inverted — high score means hard to replace) — How much an LLM with no web access could reproduce from priors. A piece on "10 SEO tips" is 95% reproducible. A piece on a specific named deal you closed last week is 5% reproducible.

Stakes — What the author is risking by publishing this. A named refusal ("we don't take clients under €50k MRR, here's why") is stakes. "It depends on your situation" is the absence of stakes.

A page totaling 0-4 across these five dimensions is commodity. 5-8 is mostly commodity. 9-11 is differentiated. 12-15 is the kind of content other people cite.
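Once a reviewer has scored each dimension, the rubric itself is simple arithmetic. The Python sketch below encodes only that arithmetic, assuming scores are whole numbers from 0 to 3. The `PageScore` class and its field names are hypothetical, and judging each dimension is still human work the code doesn't attempt.

```python
from dataclasses import dataclass, fields

# Band upper bounds as stated in the rubric: 0-4, 5-8, 9-11, 12-15.
BANDS = [
    (4, "commodity"),
    (8, "mostly commodity"),
    (11, "differentiated"),
    (15, "the kind of content other people cite"),
]


@dataclass
class PageScore:
    """Hypothetical container for one page's five dimension scores (each 0-3)."""
    specificity: int
    first_hand_experience: int
    viewpoint: int
    llm_replaceability: int  # already inverted: 3 means hard for an LLM to reproduce
    stakes: int

    def __post_init__(self) -> None:
        # Reject anything outside the 0-3 scale before it distorts the total.
        for f in fields(self):
            value = getattr(self, f.name)
            if not 0 <= value <= 3:
                raise ValueError(f"{f.name} must be 0-3, got {value}")

    def total(self) -> int:
        """Sum of the five dimensions, 0-15."""
        return sum(getattr(self, f.name) for f in fields(self))

    def band(self) -> str:
        """Map the total onto the rubric's four bands."""
        score = self.total()
        for upper, label in BANDS:
            if score <= upper:
                return label
        raise AssertionError("unreachable: the total is capped at 15")

    def zero_dimensions(self) -> list[str]:
        """Dimensions that scored zero, in declaration order."""
        return [f.name for f in fields(self) if getattr(self, f.name) == 0]


if __name__ == "__main__":
    page = PageScore(specificity=2, first_hand_experience=0, viewpoint=1,
                     llm_replaceability=1, stakes=0)
    print(page.total(), page.band(), page.zero_dimensions())
```

`zero_dimensions()` is a convenience for the audit step below, where the dimension scoring zero is the place to start a rebuild.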

Read the full methodology, then score your own page against the five dimensions.

What to do with this if your traffic is collapsing

If your commercial pages are losing clicks while keeping rankings, the answer isn't optimization tweaks. It's not adding EEAT signals to the same commodity content. It's not "use more AI for scale." Those address symptoms, not the mechanism.

Three moves, in order.

1. Audit your worst-bleeding page first, not your best-performing one.

The pages that lost the most clicks while keeping rankings are your highest-leverage rebuilds. They have proven search demand. The only thing missing is non-commodity material. Score them against the five dimensions. The dimension scoring zero is where you start.
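If you have per-page clicks, impressions, and average position for two comparable periods (for example, two quarters exported from Search Console), finding the worst-bleeding pages is a filter and a sort. The Python sketch below assumes that data is already loaded; the `PagePeriods` fields, the `worst_bleeders` helper, and the half-position tolerance for rank noise are my own assumptions, not part of any tool, and the sample numbers are made up.

```python
from dataclasses import dataclass


@dataclass
class PagePeriods:
    """Hypothetical per-page metrics for two comparable periods."""
    url: str
    clicks_before: int
    clicks_after: int
    impressions_before: int
    impressions_after: int
    position_before: float  # average position: a lower number is a better ranking
    position_after: float


def ctr(clicks: int, impressions: int) -> float:
    """Click-through rate; 0.0 when a page had no impressions."""
    return clicks / impressions if impressions else 0.0


def worst_bleeders(
    pages: list[PagePeriods], position_tolerance: float = 0.5, top_n: int = 5
) -> list[tuple[str, int, float]]:
    """Return (url, clicks_lost, relative_ctr_change) for pages whose ranking
    held or improved while clicks fell, largest click loss first.

    position_tolerance is an assumed allowance for normal rank noise.
    """
    rows = []
    for p in pages:
        # Held or improved: the new average position is not meaningfully worse (higher).
        ranking_held = p.position_after <= p.position_before + position_tolerance
        clicks_lost = p.clicks_before - p.clicks_after
        if not (ranking_held and clicks_lost > 0):
            continue
        before = ctr(p.clicks_before, p.impressions_before)
        after = ctr(p.clicks_after, p.impressions_after)
        ctr_change = (after - before) / before if before else 0.0
        rows.append((p.url, clicks_lost, ctr_change))
    # Rank by absolute clicks lost: the biggest losses carry the most proven demand.
    rows.sort(key=lambda row: row[1], reverse=True)
    return rows[:top_n]


if __name__ == "__main__":
    # Illustrative numbers only, not client data.
    sample = [
        PagePeriods("/category", 1000, 380, 20000, 21000, 1.2, 1.0),
        PagePeriods("/pricing", 400, 300, 5000, 5200, 3.0, 2.5),
        PagePeriods("/blog/what-is-x", 300, 120, 9000, 8000, 4.0, 9.0),  # ranking dropped
    ]
    for url, lost, change in worst_bleeders(sample):
        print(f"{url}: -{lost} clicks, CTR {change:+.0%}")
```

Sorting by absolute clicks lost rather than by CTR change is a judgment call: it puts the pages with the most demonstrated demand at the top, which is what makes them the highest-leverage rebuilds. Pages whose ranking actually dropped are excluded, since their problem is a different one.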

You can also use my free tool, the Commodity Content Checker, to help with the scoring.

2. Build a sourcing pipeline before you write.

Non-commodity content needs raw material that doesn't exist in training data. From one client's playbook: one customer interview per month, with signed permission, specific usage data, and two or three photos. One interview per month fuels a year of non-commodity pages. The organizational lift of capturing that material is bigger than the writing itself.

3. Stop publishing anything that fails the test.

On one of these portfolios, the team killed 30+ commodity blog posts in Q1 to free up their content lead for two strategic priorities: rebuilding the highest-CPC commercial page and writing two new commercial pages where none existed. Volume-as-floor was a 2024 strategy. In 2026, every piece must answer: "what does this company know that a competitor doesn't?"

If a piece can't answer that, it doesn't enter the pipeline.